Everyone is creative, by nature. Just unsuppress it by following Albert Bandura’s ‘Guided Mastery’.

Category: creative

Text below is a raw dump of the notes I took while watching the talk.

Formal Theory of Fun & Creativity, Jürgen Schmidhuber

More on:

- [http://www.idsia.ch/~juergen/beauty.html Theory of Beauty » Facial Attractiveness & Low-Complexity Art]

- Google Search » Artificial Curiosity

[03:35]

Novel patterns: sounds like a paradox to me.

To compute Rint, two questions:

- What is a pattern? Data that one can compress: raw data expressible by less data.

- When is it novel? Degree of novelty = number of saved bits = Fun = Rint

Subjectively novel if compressor is able to improve through learning because of a previously unknown regularity.

E.g. the fractal formula that describes broccoli because it’s self-similar. A small piece of code computes into something elaborate.

There are zillions of patterns that are already known, so they are not novel, therefore not rewarding, and not fun.

So, how do you recognize a novel pattern? By recognizing that the compression algorithm you use to encode or compress is not constant, not the same all the time, but it improves through learning. In this way, it becomes a better compressor.

E.g. saving three bits by an improved compression by learning is your intrinsic reward, Rint.

You want to maximize Rint.

Science, art, and humor are driven by Rint for finding and creating novel patterns.

Physics: create experimental data obeying a previously unknown law, some pattern that you previously did not know. E.g. discovering the law of gravity by ten falling apples. The law of gravity is an elegant piece of code or pattern describing any falling object in a very terse way. [Boy, what fun.]

Music: find or create regular melody and rhythm not exactly like the others. Find something unusual but regular, known but new. [Again, the paradox shows up. Seems like Fun & Creativity have to do with paradoxes (and koans?).] So, it’s not random, but familiar and new.

Same in visual arts. Visual arts is about novel patterns. Make regular but non-trivial patterns.

[09:20]

To build a creative agent that never stops generating non-trivial & novel & surprising data, we need two learning modules:

- an adaptive predictor or compressor or model of the growing data history as the agent is interacting with its environment, and

- a general reinforcement learner.

The LEARNING PROGRESS of (1) is the FUN or intrinsic reward of (2). That is, (2) is motivated to invent interesting things that (1) does not yet know but can easily learn. To maximize expected reward, in the absence of external reward (2) will create more and more complex behaviors that yield temporarily surprising (but eventually boring) patterns that make (1) quickly improve. We discuss how this principle explains science & art & music & humor, and how to scale up previous toy implementations of the theory since 1991, using recent powerful methods for (1) prediction and (2) reinforcement learning.

Two learning modules:

- A learning compressor of the growing history of actions and inputs. Compression progress == Fun == Surprise.

- A reward maximizer or reinforcement learner to select actions maximizing Expected Fun == Rint (+ Rext).
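The two modules can be sketched as a toy loop. This is a minimal sketch under my own assumptions, not the implementation from the talk: the compressor is an order-0 symbol-frequency model, the reinforcement learner is an epsilon-greedy bandit, and the two data sources (`regular`, `noise`) and all names are hypothetical.

```python
import math
import random
from collections import Counter


class Compressor:
    """Module (1): a toy adaptive model of one data stream (order-0 frequencies)."""

    def __init__(self, alphabet="ab"):
        self.alphabet = alphabet
        self.counts = Counter()

    def bits(self, data):
        # Ideal code length of `data`, with one pseudo-count per symbol.
        total = sum(self.counts.values()) + len(self.alphabet)
        return sum(-math.log2((self.counts[s] + 1) / total) for s in data)

    def progress(self, data):
        # Compression progress: bits saved on `data` by learning from it.
        before = self.bits(data)
        self.counts.update(data)
        return before - self.bits(data)


def run_agent(steps=200, epsilon=0.1, step_size=0.3, seed=0):
    """Module (2): an epsilon-greedy learner that maximizes expected fun."""
    rng = random.Random(seed)
    sources = {
        # A regularity the compressor can learn.
        "regular": lambda: "a" * 8,
        # Shuffled but always balanced: incompressible for an order-0 model.
        "noise": lambda: "".join(rng.sample("aaaabbbb", 8)),
    }
    compressors = {name: Compressor() for name in sources}
    value = {name: 0.0 for name in sources}   # estimated fun per action
    rewards = {name: [] for name in sources}  # intrinsic rewards received

    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(list(sources))  # explore
        else:
            action = max(value, key=value.get)  # exploit
        r_int = compressors[action].progress(sources[action]())
        value[action] += step_size * (r_int - value[action])
        rewards[action].append(r_int)
    return rewards


rewards = run_agent()
print("visits:", {name: len(r) for name, r in rewards.items()})
print("fun from 'regular', first vs. last:",
      rewards["regular"][0], rewards["regular"][-1])
```

In this toy setting the incompressible source never yields any reward, while the learnable source is fun at first and then pays less and less: temporarily surprising, eventually boring.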

Whatever you can predict, you can compress.

B(X, O(t)) = –(number of bits needed by O’s compressor to quickly, i.e. in C steps, encode data X) (MDL: Minimum Description Length).

O’s compressor improves at t: Rint(X, O(t)) ~ B(X, O(t)) – B(X, O(t – 1)) = Fun = Reward for Actor.
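To see the formula at work, here is a minimal sketch. It assumes (my assumption, not from the talk) that O’s compressor is a smoothed symbol-frequency model and that the cost of X is its ideal code length, the sum of -log2 p(symbol). `FrequencyModel` and `B` are hypothetical names chosen to mirror the notation above.

```python
import math
from collections import Counter


class FrequencyModel:
    """Toy adaptive compressor: a smoothed symbol-frequency model."""

    def __init__(self, alphabet):
        self.alphabet = list(alphabet)
        self.counts = Counter()

    def prob(self, symbol):
        # Laplace smoothing: every symbol starts with one pseudo-count.
        total = sum(self.counts.values()) + len(self.alphabet)
        return (self.counts[symbol] + 1) / total

    def bits(self, data):
        # Ideal code length of `data` under the current model.
        return sum(-math.log2(self.prob(s)) for s in data)

    def learn(self, data):
        self.counts.update(data)


def B(model, data):
    """B(X, O(t)): minus the number of bits the current model needs for X."""
    return -model.bits(data)


x = "aaaaaaab"
observer = FrequencyModel("ab")
fun = []
for t in range(3):
    b_before = B(observer, x)       # B(X, O(t - 1))
    observer.learn(x)               # the compressor improves
    b_after = B(observer, x)        # B(X, O(t))
    fun.append(b_after - b_before)  # Rint = number of saved bits
print([round(f, 3) for f in fun])
```

The first look at X saves a few bits because a new regularity (mostly `a`) is picked up; each later look saves less, since the pattern is already known.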

When things (suddenly) become better explainable, expressible, compressible (needing fewer bits to encode the same thing than before), you are having fun and creating art.

You are after the first derivative: the change or delta in the compressibility.

[16:15]

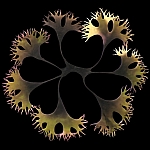

Self-similar ‘femme fractale’ based on a very simple arc-drawing program.

Draw a recursive set of circles, and remove circles or arcs until you are left with a pattern that you already know, i.e. that makes sense to you, that has meaning in your realm or world.

Recursive application of harmonic proportions.

Create a graphical structure through Fibonacci ratios and the Golden or Divine Proportion.

Many artists claim the human eye prefers this ratio over others.

The ratios of subsequent Fibonacci numbers, 1/1, 1/2, 2/3, 3/5, 5/8, 8/13, 13/21, …, converge to 1/φ ≈ 0.618034, where φ = ½ + ½√5 ≈ 1.618034 is the golden proportion, defined by a/b = (a+b)/a.
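A quick numerical check of that convergence, as a minimal sketch (`fibonacci_ratios` is a hypothetical helper name). The ratios in the order listed approach 1/φ ≈ 0.618, and their reciprocals approach φ = ½ + ½√5 ≈ 1.618.

```python
import math


def fibonacci_ratios(n):
    """Return the first n ratios of subsequent Fibonacci numbers: 1/1, 1/2, 2/3, ..."""
    ratios = []
    a, b = 1, 1
    for _ in range(n):
        ratios.append(a / b)
        a, b = b, a + b
    return ratios


phi = (1 + math.sqrt(5)) / 2
ratios = fibonacci_ratios(30)

print("first ratios:", ratios[:4])
print("30th ratio:  ", ratios[-1], "vs 1/phi =", 1 / phi)
print("reciprocal:  ", 1 / ratios[-1], "vs phi =", phi)
# The defining property a/b = (a+b)/a with a = phi and b = 1:
print("phi == 1 + 1/phi:", math.isclose(phi, 1 + 1 / phi))
```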

Jokes (are very simple).

Every joke has a punch line—unexpected if you don’t already know the joke.

If you don’t know the joke, you need extra bits to predict the thing.

So, you predict something, but it’s not what you predicted, so you need extra bits to store the stuff that you did not predict well.

However, all jokes are designed to relate the punch line back to the previous story in a way that human learning algorithms easily pick out. So the human learning algorithm kicks in and finds out that the punch line is related to the previous story (history) in a way that allows you to save bits: all you need to do is insert a pointer to the previous part of the story, so that you can get rid of the extra bits that you didn’t know and didn’t expect. The number of saved bits is Rint, the fun bit, the reward. That is what you are trying to maximize.

What reinforcement algorithms do is try to generate more data which also has the property that it is fun.
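The "pointer to the previous part of the story" can be illustrated with an off-the-shelf compressor. This is only an analogy of mine, not something from the talk: zlib encodes repeated text as back-references, so an ending that relates back to the story costs fewer extra bytes than an unrelated ending of the same length. The joke and the helper `extra_bytes` are made up for this sketch.

```python
import zlib


def extra_bytes(story: bytes, ending: bytes) -> int:
    """Bytes zlib needs for `ending` on top of what `story` alone already costs."""
    return len(zlib.compress(story + ending)) - len(zlib.compress(story))


# Hypothetical joke, invented for this illustration.
story = (b"A man walks into a library and asks for a cheeseburger with extra "
         b"pickles. The librarian whispers: sir, this is a library. ")
punch_line = b"The man whispers: a cheeseburger with extra pickles."
# An ending of the same length that shares almost nothing with the story.
unrelated = b"Quartz jugs of hot vodka vex my big fjord newt today!"[:len(punch_line)]

print("punch line:", extra_bytes(story, punch_line), "extra bytes")
print("unrelated: ", extra_bytes(story, unrelated), "extra bytes")
```

Note that zlib does not learn anything here; the sketch only shows why an ending that points back into the history is cheap to store.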

They learn from previous things to predict which other things might be possible in the future, things that may no longer be novel after that.

Recently I gave this talk to the king of Spain. Or he said he was the king of Spain. He said, if you are a scientist, then I am the king of Spain.

For artificial scientists or artists: Which algorithms do we use for compressing the growing data history? Which actor/reinforcement learning algorithms and data selectors do we use to experiment and invent the future?

Learning algorithms (predictors of novel patterns) improve all the time. They constantly get better at getting better.

[22:00]

Whatever you can predict well, you don’t have to store, so you can compress it.

Likewise, what you cannot predict well, needs extra storage space.

So learning or prediction progress creates intrinsic rewards (fun!).

This sometimes helps speed up external rewards as well.

Neural nets are underestimated! Our fast deep nets just broke old handwritten digit recognition world records.

Deep neural nets with many layers, pre-trained. [software is executable knowledge, wisdom even]

You retrain these nets under supervision, but all of this is unnecessary.

Dan Ciresan, Ueli Meier and Luca Gambardella are working on swarm intelligence, deep layers, etc.

No unsupervised training is necessary to achieve these results.

Resilient machines, robots, learn, update world model based on failed expectations, optimize, plan in model, execute. Continual self-modeling by Alex Förster. Damaged robot cannot drive anymore, learns neural self-model to detect this and self-heals.

Feed forward networks will probably not be good at compressing history.

You can use Recurrent Neural Networks to fix that.

RNNs are good at minimizing the difference between what you can predict and what actually happens. You can use them as time series predictors.

Then you get a gradient in program (or algorithm) space: you compute the first derivative of your wish and follow it to minimize the prediction error.

You get a space of programs [with RNNs] of which you can select a better program to predict the future so your wish may come true.

Alex Graves is now winning all connected handwriting recognition competitions using LSTM-RNNs.

No pre-segmented data; the RNN maximises the probability of the training set labels. Much better than single digit recognition.

Alex also won all the Arabic handwriting recognition contests, despite the fact that he doesn’t understand Arabic.

RNNs capture patterns (frequently occurring combinations) to predict future events and self-correct them.

Use these patterns again and again to predict based on different input sequences.

Symbols and self-symbols are just natural by-products of historical compression. Symbolic computation.

Frequent observations are best encoded, also neurally, by storing prototypes.

One thing is experienced again and again as the agent interacts with the world: the agent itself.

Rint can also be measured as the relative entropy between the priors and posteriors of (1), i.e. before and after learning.

Why are you looking here rather than there? Because here is some learning opportunity that you don’t find there. This has been used to greatly improve eye-movement predictions.
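A minimal sketch of that measure, assuming (my assumption) that the learner holds a discrete belief over a few hypotheses and updates it with Bayes' rule. The coin example and the names `kl_bits` and `bayes_update` are hypothetical.

```python
import math


def kl_bits(posterior, prior):
    """Relative entropy D(posterior || prior) in bits."""
    return sum(q * math.log2(q / p) for q, p in zip(posterior, prior) if q > 0)


def bayes_update(prior, likelihoods):
    """Posterior over hypotheses given the likelihood of the data under each."""
    unnormalized = [p * l for p, l in zip(prior, likelihoods)]
    z = sum(unnormalized)
    return [u / z for u in unnormalized]


# Three hypotheses about a coin's probability of heads, equally likely a priori.
biases = [0.2, 0.5, 0.8]
prior = [1 / 3, 1 / 3, 1 / 3]

# Eight heads in a row: the belief shifts strongly, so there is a lot to learn.
surprising = bayes_update(prior, [b ** 8 for b in biases])
# Data that every hypothesis explains equally well: the belief does not move.
uninformative = bayes_update(prior, [1.0, 1.0, 1.0])

print("Rint after surprising data:   ", kl_bits(surprising, prior), "bits")
print("Rint after uninformative data:", kl_bits(uninformative, prior), "bits")
```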

I bet that at the end of program “while not condition do begin … end” the contents of address 23721 will be 5.

2002: left & right brain symmetric experiments: prediction of the outcome of experiments.

Both left and right brain can predict the outcome and disagree. Once they agree to disagree, they can run the experiment. They can bet, and one will be the surprised loser and one will be the winner. The winner sometimes gets copied over the loser. The loser has to pay the winner.

One has a better model than the other.

The intrinsic reward is essentially moving from one guy to the other.

The sum of intrinsic rewards is always zero—what one gains, the other loses.

Each one is maximally motivated to come up with an experiment that the other finds attractive, but where both predict a different outcome.

If they both predict the same result, they are not interested in the experiment because there is nothing to learn, there is no fun.

Only when the predictions differ do you have an incentive to run the experiment, in order to have fun and learn.

That’s why they increase their repertoire of pluralistic programs, learning and building on the previously learned repertoire [and have fun!].
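The betting rule can be written down in a few lines. A minimal sketch with hypothetical names; the fixed `stake` and the rule that nobody pays when both modules are wrong are my own assumptions.

```python
from typing import Optional, Tuple


def settle_bet(left_prediction: int, right_prediction: int, outcome: int,
               stake: float = 1.0) -> Optional[Tuple[float, float]]:
    """Return (left reward, right reward), or None if no experiment is run."""
    if left_prediction == right_prediction:
        return None  # they agree: nothing to learn, no fun, no bet
    if outcome == left_prediction:
        return (stake, -stake)  # the surprised right module pays
    if outcome == right_prediction:
        return (-stake, stake)  # the surprised left module pays
    return (0.0, 0.0)  # assumption: nobody pays when both are wrong


# "I bet the contents of address 23721 will be 5."
print(settle_bet(5, 5, outcome=5))  # None
print(settle_bet(5, 7, outcome=5))  # (1.0, -1.0)
```

Whatever the outcome, the two rewards add up to zero: what one gains, the other loses.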

[So, different opinions are actually very valuable! They hold the option to create something new, to innovate, and to progress while having fun, provided you agree to disagree and do the experiment (a trial run, putting it to the test).]

Just like a little baby that focusses on tiny bits to make progress.

The fun of improving motor skills. Why should anyone learn juggling? Learning motor skills is also driven by the desire to create novel patterns. Juggling is fun because it is regular and you get a lot of reward because it leads to a novel way of behaving, saves bits, and adds to your existing patterns.

[Patterns == behavior; novel behavior → change; change is fun!]

Intrinsically motivated cumulatively learning robots.

Pieter Adriaans: beauty not very compressible, not incompressible, but somewhere in between.

Pleasingness is dependent on the observer: the amount of compression (the first derivative) is a measure for pleasure. That is why some things are pleasurable for some but not for others. It’s an inverse hyperbolic curve with a maximum. The maximum is (personally) subjective. And it is a dynamic thing—what once was interesting and fun, becomes standard and boring; you evolve.

The theory of humor is also based on unexpectedness. Why is something funny? Because it is unexpected, and you find a quick way to incorporate it as a new pattern, a new way of doing things. The fun part is in the progress of learning. Humor takes something that is incompressible at first, but then recognizes its compressibility and learns that into a new habit or trait. You save the bits. So the first derivative is the crucial thing. It’s not the compressibility itself. It’s the dynamic process that leads to learning before and after compressing.

[So, life is a constant stream of novel patterns you experience. Life is a pattern generator, where a new pattern is only incrementally different from previous patterns.]

Q: So, why is everyone still going to the Louvre to see the Mona Lisa while you can see the same picture over and over again on the web?

A: Because the sensory experience of actually going to the Louvre creates a whole stream of novel patterns.

[This is why re-reading a book after a few years is also fun: your paradigm has changed, and therefore re-reading a book creates novel patterns.]

Wonderful interview with [http://aardnoot.nl/Amit_Gosmwami Amit Goswami] about [http://www.wie.org/j11/goswami.asp Scientific Proof of the Existence of God]. For me, the '''essence''' is still:

:Consciousness matters™—and that’s all that matters

And you can take that '''very literally'''. Even the word ''heel'' (Dutch for both "very" and "whole") in the previous sentence gains more value for me because of it.

The American National Science Foundation collects [http://nsf.gov/news/special_reports/scivis/ wonderful scientific imagery] by means of an annual competition.

Stumbled across a wonderful website yesterday with a lot of awesome open source Flash applications that produce wonderful graphics, many of them fractal and complex. [http://levitated.net/ Check out Levitated.net], a friendly company.

The term ‘planning’ means quite different things to different people. Many a person has been left almost traumatized by it, especially by the rigid, dogmatic, follow-the-plan-or-I-shoot version that has to be followed with an iron fist.

So it is time to breathe new life into ‘planning’ and rid it of its negative connotations.

For me, planning means nothing more and nothing less than:

'''Planning''': applying precisely enough tension to give the whole a constructive direction and, at the same time, enough free space for creativity and personal input.

In Japan they call that [[http://wiki.aardrock.com/Hoshin_Kanri|Hoshin Kanri]]: '''hoshin''' means compass and gives direction; '''kanri''' means management or control.

So: a long-term vision combined with agile development under continuously advancing insight, so that you can keep adjusting course. Because even the changes change.

As soon as you make clear agreements about the '''process''' to follow, its outcome in the form of a product, and the development of both, you can make truly anything, and the whole continuously adapts to changing circumstances.

Dig deeper into [[http://wiki.aardrock.com/Wat_Werkt_Wel|what does work]] and [[http://wiki.aardrock.com/Risicoloos_resultaat_en_rendement|risk-free result and return]].

Worker Owned and Operated

telekommunisten is controlled by its workers and committed to staying that way. We believe we can serve our customers best and at the lowest cost by remaining focused on meeting the needs of our employees and customers, not on profits for outside shareholders. Being worker-owned means that all the money you spend on our products goes directly to the maintenance and improvement of the service you receive.

Venture Communism

Venture Communism is a project to research, experiment with, and initiate social enterprises, particularly a proposed rent-sharing form called a Venture Commune. The project also encourages dialogue and investigation of alternative economies and workers’ struggle against private property.

The goal is to promote understanding of the relationship between distribution of productive assets, political power and global exploitation and poverty and to investigate how worker-controlled enterprises can contribute to solving this problem, as well as to put such ideas into practice.

Several articles have been written and many on-line correspondences sent describing and discussing venture communism, as well as analyzing the nature of property generally.

Source: [[http://www.telekommunisten.net/about Telekommunisten]], '''The Revolution is Calling'''.

Wonderful rebuttal by Deepak Chopra: [http://www.intentblog.com/archives/2007/09/deepaks_article.html The Science Delusion? Review of Richard Dawkins: The God Delusion]. Materialism vs. spiritualism and God. And resonating with [[Einstein’s God, or The Hopes for Secular Spirituality]].

Thank you, Richard Dawkins, for writing “The God Delusion”, for it draws enormous attention to this ever-important subject. You make people like Chopra and myself dig deeper into consciousness, the universe, quantum physics, God, Allah, Buddha and more. We’re becoming more conscious and knowledgeable about it.

And it’s about time, since we’re rapidly evolving towards the '''[http://en.wikipedia.org/wiki/Noosphere noosphere]''', catalyzed by the [http://www.amazon.com/Time-Technosphere-Law-Human-Affairs/dp/1879181991 technosphere] with the Internet as its most brilliant example at this time.

Spaceship Earth is entering its riskiest trajectory while [http://en.wikipedia.org/wiki/Metamorphosis metamorphosing] to its next order: extreme [http://en.wikipedia.org/wiki/Emergence emergence]. It is just like the life-threatening phase during the metamorphosis from caterpillar to butterfly, albeit on a very large, global scale.

'''May we all evolve into a global, and perhaps universal, wisdom and happiness'''. And beware: these massive-scale changes, or '''emergence''', happen relatively abruptly. Buckle up.

[http://www.crowdsourcingdirectory.com/?p=57 How to create Hot Crowds]: Hot Groups are high performing teams with six characteristics:

#Hot teams are highly dedicated to end results and are enthusiastic

#They thrive on ridiculous deadlines and high hurdles to take

#They are irreverent and non-hierarchical, playing around and having fun

#They are made up of widely divergent disciplines and abilities

#They use an open and eclectic workspace

#Hot teams connect to the outside world and look for solutions outside themselves

(Via [http://www.crowdsourcingdirectory.com CrowdsourcingDirectory].)

The Artist’s Way

I read about it yesterday in [http://www.zinboek.nl/weblog/2007/07/09/mijn-creativiteitsmonster Mijn creativiteitsmonster] by [http://www.zinboek.nl/weblog André Meiresonne]: '''[http://www.theartistsway.com/ The Artist’s Way]: A Spiritual Path to Higher Creativity''' by [http://en.wikipedia.org/wiki/Julia_Cameron Julia Cameron]. I can surely learn a lot from it. It is also coming along on holiday. Also bought at [http://www.mystiek.nl/ Mystiek]. In English. €17.55.

[http://weblog.oomph.nl/ Sanne Roemen] and [http://danalog.nl Daan Kortenbach] are calling for a [http://www.naamlooz.nl/2007/07/briljante-progr.html co-op of '''brilliant programmers'''] to be set up. As far as I am concerned, they should extend that to '''brilliant designers, architects and project leaders'''. And then get going in a nicely agile way.

What if we could seamlessly combine that with the large companies in this field? Find an organizational form in which both strengthen each other. The '''big ones''' are '''rare, resilient, rooted and enormous'''. The '''one-person shops''', two-person shops and slightly larger outfits are '''many, small, agile and innovative'''. How can they strengthen each other?!

Hey Sanne, Daan, '''I want to join!''' Shall we sit down in August to give this further shape?

My poem “[http://wiki.aardrock.com/Wanneer_dan Wanneer Dan]” sums it all up.

Just one day after my registration at [[Cambrian House, Home of Crowdsourcing]], Carl at [http://creativecrowds.com Creative Crowds] tipped me off about the [http://www.crowdsourcingdirectory.com/ Crowdsourcing Directory].

Uhmpf… an awful lot of creative crowd work going on. A nearly infinite flurry of innovation sources. How come I only see this now? Wonderful! Diving into it, because I will find the fertile ground where my sometimes wild and big ideas are valued and will flourish.

Thanks for the pointer Carl!

P.S. It appears that Carl sends email from the [http://innovationfactory.nl/ Innovation Factory]. Another interesting company that I have only now discovered.

P.S. 2 Seems they’re working hard to realize [http://creativecrowds.com Creative Crowds] and [http://creativecapitals.org/ Creative Capitals].